Nvidia AI Ports 3,000-Cell GPU Library Overnight

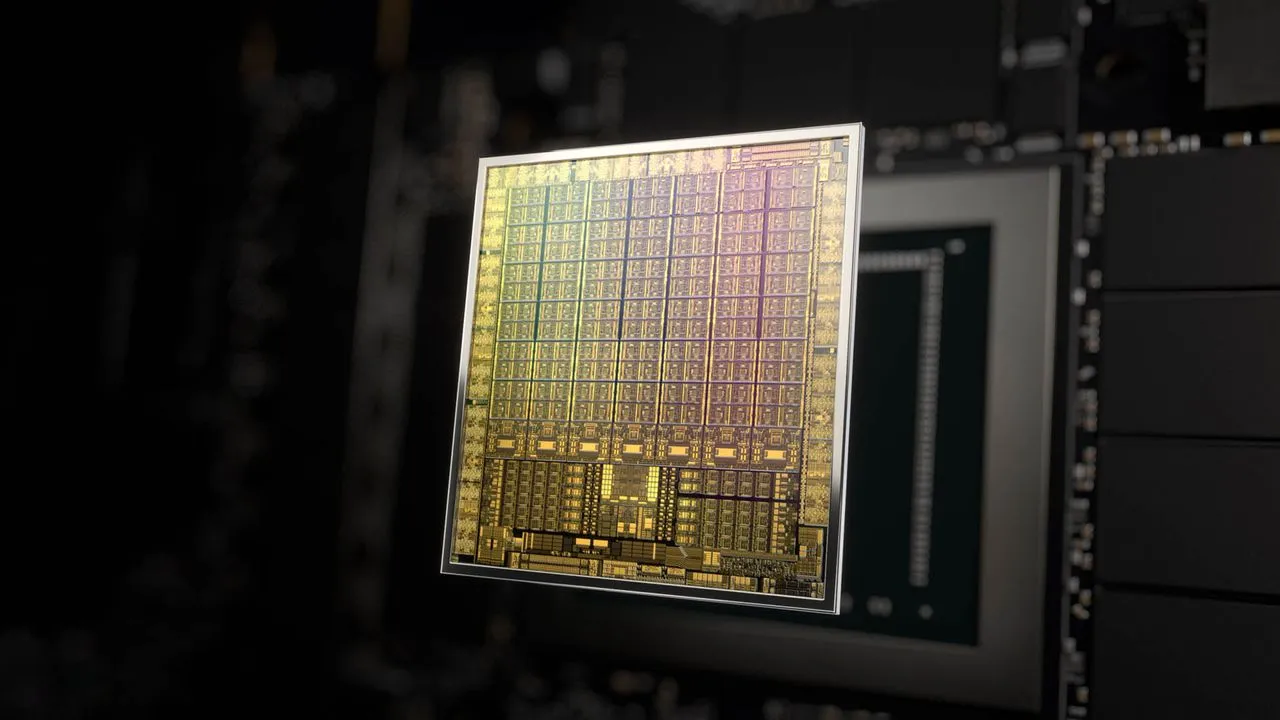

Nvidia's NVCell tool uses reinforcement learning to port the company's library of 2,500 to 3,000 cells to a new process node overnight on a single GPU, a job that used to take a team of engineers months. The speedup shortens next-generation GPU development as demand for AI chips surges.

The library holds 2,500 to 3,000 pre-designed standard cells, the basic building blocks from which GPU components like texture units are assembled. Previously, adapting it to a new TSMC process node took eight engineers about 10 months. Nvidia's NVCell tool, now at version 2 or 3, generates layouts that match or exceed human results in cell size, power, and delay.
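To put the manual effort in perspective, the figures above can be turned into a rough per-cell cost. This is a back-of-the-envelope sketch only: the 21 working days per month is an assumption, not a number from Nvidia, and the function names are illustrative.

```python
# Rough manual porting effort, using the figures quoted in the article.
ENGINEERS = 8
MONTHS = 10
CELLS_LOW, CELLS_HIGH = 2_500, 3_000
WORKING_DAYS_PER_MONTH = 21  # assumption, not from the source


def manual_effort_person_months(engineers: int, months: int) -> int:
    """Total manual effort in person-months."""
    return engineers * months


def person_days_per_cell(person_months: float, cells: int,
                         days_per_month: int = WORKING_DAYS_PER_MONTH) -> float:
    """Average person-days spent on each cell."""
    return person_months * days_per_month / cells


effort = manual_effort_person_months(ENGINEERS, MONTHS)
print(f"Manual effort: {effort} person-months")
print(f"Per cell (3,000 cells): {person_days_per_cell(effort, CELLS_HIGH):.2f} person-days")
print(f"Per cell (2,500 cells): {person_days_per_cell(effort, CELLS_LOW):.2f} person-days")
```

Under that assumption, the manual port works out to 80 person-months, or somewhat more than half a person-day per cell, which is the workload NVCell is reported to handle in one overnight run.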

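NVCell is the product of several years of development, and the 2,500 to 3,000 figure is the updated cell count given by Bill Dally, Nvidia's chief scientist. Nvidia also applies AI elsewhere in the design flow: PrefixRL uses reinforcement learning to design parallel-prefix circuits such as adders, and other tools tackle floorplanning and placement. Its F model, an executable representation of the GPU that is shared with TSMC, targets the verification bottleneck in design.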
